Is your new AI-powered workflow feeling... well, a bit slow? Staring at a progress bar while generating images or waiting for a local language model to respond is a massive drag. You've got the cutting-edge software, but the experience is anything but fast. For many South Africans diving into AI, this is a frustrating reality. But don't worry, effective AI performance optimization is within reach, and you don't always need a complete overhaul to fix slow AI applications.

Let's get that performance dialled up. 🚀

Understanding Why Your AI Applications Are Slow

Before you can fix the problem, you need to know the cause. When an AI application feels sluggish, the cause is usually a hardware bottleneck, although outdated or poorly configured software can contribute too. Your PC is struggling to keep up with the intense computational demands. Think of it as trying to run a marathon in flip-flops... you'll get there eventually, but it won't be pretty.

The main culprits are usually:

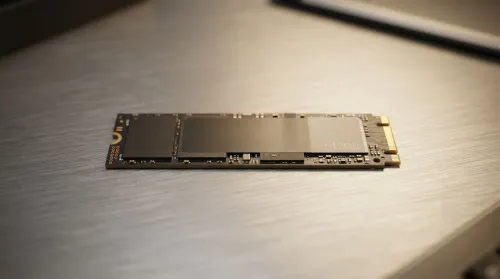

- GPU VRAM: The dedicated memory on your graphics card. Large AI models need a lot of it. If you run out, the application either fails with an out-of-memory error or spills over into system RAM, which is far slower for the GPU to access, causing a massive performance drop.

- GPU Processing Power: The raw number-crunching ability of your graphics card, indicated by figures such as the CUDA core count (NVIDIA) or the number of stream processors and compute units (AMD).

- System RAM: While not as fast as VRAM, having plenty of system RAM is crucial for loading models and datasets.

- Software & Drivers: Outdated drivers or poorly configured software can leave performance on the table.

Hardware Upgrades for AI Performance Optimization

The most direct way to fix slow AI applications is by ensuring your hardware is up to the task. This is where the magic really happens.

The GPU: Your AI Engine ⚡

Your Graphics Processing Unit (GPU) is the single most important component for local AI tasks. For years, NVIDIA has been the top choice due to its mature CUDA software ecosystem, which most AI tools are built on. A rig with a powerful RTX 40-series card provides a fantastic foundation for any AI enthusiast. If you're serious about AI, exploring powerful NVIDIA GeForce gaming PCs is the best place to start.

However, the landscape is changing. AMD's recent advancements with their ROCm platform mean that their cards are becoming increasingly viable alternatives, often offering excellent performance for the price. For those looking for great value, checking out the latest AMD Radeon gaming rigs is a smart move.

Check Your VRAM Usage 🔧

Wondering if VRAM is your bottleneck? On Windows, open Task Manager (Ctrl+Shift+Esc), go to the 'Performance' tab, and click on your GPU. You'll see a 'Dedicated GPU Memory Usage' graph. If this is constantly maxed out while running your AI app, you've found your problem. It's a strong sign you need a card with more VRAM, or a smaller or quantized model.
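If you'd rather check from a script, here is a minimal sketch for NVIDIA cards. It assumes the `nvidia-smi` tool that ships with the NVIDIA driver is on your PATH, and the function names are our own, purely for illustration.

```python
import shutil
import subprocess


def parse_gpu_memory(csv_text):
    """Parse 'used, total' lines (values in MiB) into (used, total, percent) tuples."""
    rows = []
    for line in csv_text.strip().splitlines():
        used_str, total_str = (field.strip() for field in line.split(","))
        used, total = int(used_str), int(total_str)
        rows.append((used, total, round(100 * used / total, 1)))
    return rows


def query_gpu_memory():
    """Ask nvidia-smi for memory figures; return [] if the tool is not installed."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        [
            "nvidia-smi",
            "--query-gpu=memory.used,memory.total",
            "--format=csv,noheader,nounits",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_gpu_memory(result.stdout)


if __name__ == "__main__":
    # One line per GPU; run this while your AI app is busy.
    for index, (used, total, percent) in enumerate(query_gpu_memory()):
        print(f"GPU {index}: {used} / {total} MiB ({percent}%)")
```

If the percentage sits in the high nineties whenever your AI app is working, that matches the maxed-out graph described above.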

Beyond the GPU: RAM and Professional Setups

While the GPU does the heavy lifting, it doesn't work in a vacuum. If you're working with exceptionally large models or complex datasets that exceed your GPU's VRAM, your system's performance will depend heavily on having a large pool of fast system RAM (32GB is a good starting point; 64GB or more is better). For professionals or serious hobbyists who need uncompromising power and the ability to run multiple GPUs, standard gaming PCs might not be enough. In these cases, investing in purpose-built workstation PCs with massive RAM capacity and superior cooling is the logical next step for ultimate AI performance optimization.
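To get a feel for when a model outgrows your card, a back-of-the-envelope estimate helps. The sketch below assumes 2 bytes per parameter (16-bit weights) and counts only the weights, ignoring activations and other overhead, so real usage will be higher. The 7B and 13B model sizes and the 12 GB card are hypothetical examples.

```python
def weights_size_gb(params_billion, bytes_per_param=2):
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    # Billions of parameters times bytes per parameter gives GB directly.
    return params_billion * bytes_per_param


def spill_to_ram_gb(params_billion, vram_gb, bytes_per_param=2):
    """GB of weights that do not fit in VRAM and would have to sit in system RAM."""
    return max(0.0, weights_size_gb(params_billion, bytes_per_param) - vram_gb)


if __name__ == "__main__":
    for params in (7, 13):
        size = weights_size_gb(params)
        spill = spill_to_ram_gb(params, vram_gb=12)
        print(f"{params}B model: ~{size} GB of weights, ~{spill} GB over a 12 GB card")
```

Anything reported as "over" has to be held in system RAM, which is why a generous RAM pool matters once models exceed your VRAM.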

Software Tweaks to Speed Up AI

Hardware isn't the whole story. You can often squeeze more performance out of your existing setup with a few simple software adjustments.

1. Update Your Graphics Drivers

This is the easiest step. Both NVIDIA and AMD regularly release driver updates that include performance improvements and bug fixes for AI and machine learning workloads. Make sure you're always on the latest version.

2. Use Optimised Software Versions

Many AI tools, like the Automatic1111 web UI for Stable Diffusion, have specific command-line arguments or settings that can dramatically improve speed on certain GPUs. A quick search for "optimisation settings for [your GPU] in [your AI tool]" can uncover simple flags that unlock extra performance.
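As an illustration, the Automatic1111 web UI accepts flags such as `--xformers`, `--medvram` and `--lowvram` through its `COMMANDLINE_ARGS` setting. The small helper below is a hypothetical sketch of how you might choose between them. The VRAM cut-offs are our own assumptions, not official guidance, so treat them as a starting point and check the tool's documentation for your version.

```python
def suggest_webui_args(vram_gb, is_nvidia):
    """Return a COMMANDLINE_ARGS string for the Automatic1111 web UI.

    The VRAM thresholds below are illustrative assumptions only.
    """
    args = []
    if is_nvidia:
        # Memory-efficient attention from the xformers library (NVIDIA cards).
        args.append("--xformers")
    if vram_gb <= 4:
        # Most aggressive memory saving; noticeably slower per image.
        args.append("--lowvram")
    elif vram_gb <= 8:
        # Moderate memory saving at some cost in speed.
        args.append("--medvram")
    return " ".join(args)


if __name__ == "__main__":
    print(suggest_webui_args(vram_gb=8, is_nvidia=True))
```

Note that `--medvram` and `--lowvram` trade some speed for lower memory use. They help most when the alternative is running out of VRAM altogether.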

3. Consider Model Quantization

This is a more advanced technique, but it's incredibly effective. Quantization involves converting an AI model's weights to a lower-precision data format (e.g., from 32-bit to 16-bit or even 8-bit). Halving the bits per weight roughly halves the VRAM the weights need, and a model that now fits entirely in VRAM usually runs far faster. The trade-off is a small, often unnoticeable, reduction in output quality. It's a fantastic way to run larger models on less powerful hardware. ✨
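To make the idea concrete, here is a toy sketch of symmetric 8-bit quantization in plain Python. It uses a single scale factor for a short list of made-up weights. Real tools work on whole tensors and often use per-channel or per-block scales, so this shows the principle and is not how any particular library does it.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127 if largest > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the 8-bit integers."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.82, -1.27, 0.003, 0.45]  # made-up example values
    quantized, scale = quantize_int8(weights)
    restored = dequantize(quantized, scale)
    worst_error = max(abs(a - b) for a, b in zip(weights, restored))
    print("8-bit values:", quantized)
    print("largest rounding error:", worst_error)
```

Each weight now needs one byte instead of four, and no restored value is off by more than about half the scale factor. That small rounding error is the quality loss mentioned above.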

By combining the right hardware with smart software settings, you can transform a frustratingly slow AI experience into a smooth, creative, and productive workflow.

Ready to Unleash AI Speed?

Slow hardware is the biggest barrier to exploring the world of AI. If you're ready to stop waiting and start creating, Evetech has the power you need. Explore our range of high-performance Workstation PCs and build the ultimate machine to conquer any AI task.