Your gaming rig is already a beast... but did you know it could also be your personal AI powerhouse? Forget waiting for cloud servers. We're talking about running powerful Large Language Models (LLMs) like Llama 3, or image generators like Stable Diffusion, right here in Mzansi, on your own machine. Finding the best PC for LLM tasks isn't just for data scientists anymore; it's the next frontier for gamers, creators, and power users who want total control.

Why a Gaming PC Is the Best PC for LLMs

You might be wondering why your gaming PC is suddenly the talk of the AI town. The answer is simple: the very components that deliver mind-blowing frame rates in Cyberpunk 2077 are the same ones that excel at the complex calculations needed for artificial intelligence.

At the heart of it all is the Graphics Processing Unit (GPU). Modern GPUs are parallel processing monsters, designed to handle thousands of tasks simultaneously. For gaming, that means rendering realistic lighting and textures. For AI, it means crunching the billions of matrix calculations that make LLMs tick. This overlap makes today's top gaming rigs incredibly effective and value-packed for local AI.

The Core Components for Your Local AI Rig

Building or choosing the best PC for LLMs comes down to prioritising a few key components. While a balanced system is always ideal, some parts carry much more weight than others for local AI.

The GPU: VRAM is King 👑

For running LLMs, the most critical specification on your GPU isn't its clock speed… it's the amount of Video RAM (VRAM). Think of VRAM as the dedicated workspace for the AI model. The larger the model you want to run, the more VRAM you need to load it.

- 12GB VRAM: A great starting point for experimenting with smaller, optimised models.

- 16GB VRAM: The sweet spot for running popular and powerful models efficiently.

- 24GB+ VRAM: The tier for developers, researchers, or anyone serious about fine-tuning or running larger open-source models with far fewer compromises.
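To see where tiers like these come from, here is a minimal sketch of the back-of-envelope maths, assuming the model's weights dominate memory use. The function name `estimate_vram_gb`, the bytes-per-weight table, and the 1.2x overhead multiplier are illustrative assumptions rather than figures from any particular tool; real usage shifts with context length and the software you run.

```python
# Rough VRAM estimate for loading an LLM's weights.
# Every number here is an illustrative assumption, not a measurement.

BYTES_PER_WEIGHT = {
    "fp16": 2.0,  # 16-bit floating point weights
    "int8": 1.0,  # 8-bit quantised weights
    "int4": 0.5,  # 4-bit quantised weights
}


def estimate_vram_gb(params_billions: float, precision: str = "fp16",
                     overhead: float = 1.2) -> float:
    """Return an approximate VRAM requirement in GB.

    `overhead` is an assumed multiplier covering the context cache and
    runtime buffers on top of the raw weights.
    """
    # 1 billion parameters at 1 byte each is roughly 1 GB.
    weights_gb = params_billions * BYTES_PER_WEIGHT[precision]
    return round(weights_gb * overhead, 1)


if __name__ == "__main__":
    for size in (8, 13, 70):
        for precision in BYTES_PER_WEIGHT:
            needed = estimate_vram_gb(size, precision)
            print(f"{size}B model @ {precision}: ~{needed} GB")
```

Under these assumptions, an 8-billion-parameter model needs roughly 19GB at 16-bit precision but under 5GB at 4-bit, which is why quantised models are the usual route on 12GB and 16GB cards.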

Currently, NVIDIA holds a significant advantage with its CUDA technology, which is the most mature and widely supported platform for AI applications. That makes a high-VRAM GeForce card a top choice for anyone building a PC for running LLMs. For a solid foundation, check out Evetech's range of powerful NVIDIA GeForce gaming PCs.
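Not sure how much VRAM your current GeForce card has? As a quick sketch, the script below asks NVIDIA's `nvidia-smi` utility (installed with the GeForce driver) for each GPU's name and total memory. The helper names `parse_gpu_lines` and `list_nvidia_gpus` are made up for this example, and the script simply reports nothing if `nvidia-smi` isn't found.

```python
import shutil
import subprocess

# Ask nvidia-smi for GPU name and total memory (in MiB) as plain CSV.
QUERY = [
    "nvidia-smi",
    "--query-gpu=name,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_gpu_lines(output: str) -> list[tuple[str, int]]:
    """Parse 'name, memory_in_MiB' lines into (name, MiB) tuples."""
    gpus = []
    for line in output.strip().splitlines():
        if not line.strip():
            continue  # skip blank lines
        name, mem = line.rsplit(",", 1)
        gpus.append((name.strip(), int(mem.strip())))
    return gpus


def list_nvidia_gpus() -> list[tuple[str, int]]:
    """Return detected NVIDIA GPUs, or an empty list if none can be queried."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(QUERY, capture_output=True, text=True, check=False)
    if result.returncode != 0:
        return []
    return parse_gpu_lines(result.stdout)


if __name__ == "__main__":
    gpus = list_nvidia_gpus()
    if not gpus:
        print("No NVIDIA GPU detected via nvidia-smi.")
    for name, mib in gpus:
        print(f"{name}: {mib / 1024:.1f} GiB VRAM")
```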

Easy AI Setup ⚡

Want to run LLMs without complex command-line setups? LM Studio provides a simple graphical interface, while Ollama keeps things to a single install and a short command. Either way, you can download, manage, and chat with various open-source models in just a few clicks or keystrokes, turning your gaming PC into an AI chat beast in minutes.
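Once a tool like Ollama is running, your own scripts can talk to it too. The sketch below sends one prompt to Ollama's local HTTP endpoint using only Python's standard library. It assumes Ollama is running on its default port (11434) and that a model tagged `llama3` has already been downloaded; the helper names `build_request` and `ask` are hypothetical.

```python
import json
import urllib.request

# Ollama's default local endpoint; change it if you run on another port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = "llama3") -> str:
    """Send the prompt and return the model's full reply as text."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=120) as resp:
        return json.loads(resp.read())["response"]


if __name__ == "__main__":
    # Requires a running Ollama server with the model already pulled.
    print(ask("Explain VRAM in one sentence."))
```

Everything stays on your machine: the request goes to `localhost`, not to a cloud server.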

What About AMD?

Team Red is absolutely a contender, especially from a price-to-performance perspective. ROCm is AMD's answer to CUDA, and it's rapidly improving. While software support can sometimes lag behind NVIDIA's, a high-VRAM Radeon card can offer incredible value for your local AI build. If you're on a budget or enjoy tinkering, exploring AMD Radeon gaming PCs is a smart move.

CPU, RAM, and Storage

While the GPU does the heavy lifting, the rest of your system needs to keep up.

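- System RAM: Aim for at least 32GB. When a model doesn't fit entirely in VRAM, many tools can offload the rest to regular RAM. That runs noticeably slower than keeping everything on the GPU, but having plenty of RAM means bigger models will still load and run.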

- CPU: A modern multi-core CPU (like an Intel Core i5/i7 or AMD Ryzen 5/7) is more than enough. The CPU's job is mainly to prepare data for the GPU.

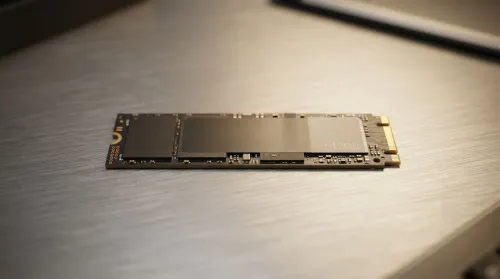

- Storage: A fast NVMe SSD is non-negotiable. AI models are huge files, often 10GB to over 100GB in size, and an SSD ensures they load in seconds, not minutes.
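To make the RAM and storage points concrete, here is a rough sketch that works out how much of a model spills from VRAM into system RAM, and how long the file takes to read from disk. The function names, the 1.5GB of VRAM reserved for the desktop and drivers, and the drive speeds (about 5GB/s for NVMe, 0.5GB/s for a SATA SSD) are all round-number assumptions for illustration, not benchmarks.

```python
def split_model(model_gb: float, vram_gb: float, ram_gb: float,
                reserved_vram_gb: float = 1.5) -> dict:
    """Estimate how a model is divided between VRAM and system RAM.

    `reserved_vram_gb` is an assumed amount of VRAM already in use by
    the desktop, drivers, and other apps.
    """
    usable_vram = max(vram_gb - reserved_vram_gb, 0.0)
    in_vram = min(model_gb, usable_vram)
    spill = model_gb - in_vram  # whatever doesn't fit goes to system RAM
    return {
        "in_vram_gb": round(in_vram, 1),
        "in_ram_gb": round(spill, 1),
        "fits_in_ram": spill <= ram_gb,
    }


def load_seconds(model_gb: float, read_gb_per_s: float) -> float:
    """Best-case time to read a model file at a given drive speed."""
    return round(model_gb / read_gb_per_s, 1)


if __name__ == "__main__":
    # A hypothetical 40GB model on a 16GB card with 32GB of system RAM.
    print(split_model(model_gb=40, vram_gb=16, ram_gb=32))
    print("NVMe (assumed 5 GB/s):", load_seconds(40, 5.0), "s")
    print("SATA SSD (assumed 0.5 GB/s):", load_seconds(40, 0.5), "s")
```

The takeaway matches the list above: VRAM decides how much of the model runs at full speed, system RAM decides whether the remainder fits at all, and drive speed decides how long you wait before the first prompt.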

From Gaming Beast to AI Workstation 🚀

For most enthusiasts, a high-end gaming PC is the perfect machine for local AI. But what if you're a developer, a 3D artist, or a researcher needing to run multiple massive models or perform custom training? That's when it's time to consider a purpose-built machine. Professional workstation PCs offer options for multiple GPUs, massive amounts of RAM, and components certified for 24/7 reliability, providing the ultimate platform for serious AI development.

Ultimately, the best PC for LLMs is the one that matches your ambition. Whether you're a curious gamer or a professional developer, the hardware to unlock the power of local AI is more accessible than ever before.

Ready to Build Your Local AI Powerhouse?

The world of local AI is exploding, and your gaming PC is the perfect ticket in. From tinkering with models to boosting your productivity, the right hardware is key. Explore our customisable gaming PCs and configure the perfect rig for your AI ambitions.