Artificial Intelligence is no longer just for massive tech labs. Whether you are a computer science student in Pretoria or a local tech hobbyist, you might be eyeing Nvidia's upcoming entry-level GPUs. But can the RTX 5050 run AI and machine learning workloads effectively? Let us break down exactly what this budget-friendly card can handle... and where it might hit a wall.

The Architecture Behind the RTX 5050 ⚡

Nvidia's 50-series brings the new Blackwell architecture to the masses. For AI enthusiasts, the most important feature is the inclusion of upgraded Tensor Cores. These dedicated silicon blocks accelerate the matrix multiplications at the heart of neural networks, particularly at reduced precisions such as FP16 and below. This means even the entry-level RTX 5050 has dedicated hardware for AI tasks, rather than relying on its general-purpose shader cores alone. If you are looking at standalone graphics cards on a strict ZAR budget, this entry-level option offers a highly modern feature set.

VRAM... The Ultimate AI Bottleneck

While the processing power is there, machine learning is notoriously hungry for memory. The RTX 5050 is expected to ship with limited VRAM... likely around 8GB. A model's weights, plus the working data it produces while running, must fit in that memory for the GPU to run it at full speed. This makes VRAM the critical factor when deciding whether the RTX 5050 can run AI and machine learning workloads for your specific needs.

Training large language models from scratch is out of the question here, because training must hold gradients and optimizer state in memory on top of the weights themselves. However, inference is a completely different story. Inference simply means running a pre-trained model to generate text or images. If you are shopping for modern laptops equipped with entry-level 50-series chips, you can definitely run smaller, highly optimised models locally.
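To put rough numbers on the gap between training and inference, here is a minimal back-of-the-envelope sketch. It assumes a common rule of thumb rather than measured figures: about 2 bytes per parameter for FP16 inference, and about 16 bytes per parameter for mixed-precision training with the Adam optimizer. Activations are ignored, and the function name and the 8 GiB card size are illustrative assumptions.

```python
GIB = 1024 ** 3

# Rule-of-thumb bytes per parameter (assumptions, not measurements):
#   inference with FP16 weights only:  2
#   mixed-precision Adam training:     2 (weights) + 2 (gradients)
#                                      + 12 (FP32 master copy, momentum, variance)
BYTES_PER_PARAM = {"inference_fp16": 2, "train_adam_mixed": 16}


def model_state_gib(num_params: float, mode: str) -> float:
    """Approximate memory for model state only (activations excluded)."""
    return num_params * BYTES_PER_PARAM[mode] / GIB


if __name__ == "__main__":
    vram_gib = 8  # assumed VRAM of the card
    for label, params in [("1B", 1e9), ("7B", 7e9)]:
        for mode in BYTES_PER_PARAM:
            need = model_state_gib(params, mode)
            verdict = "fits" if need <= vram_gib else "does not fit"
            print(f"{label} {mode}: {need:.1f} GiB -> {verdict} in {vram_gib} GiB")
```

Under these assumptions, even a 1-billion-parameter model needs roughly 15 GiB of model state to train, while the same model needs under 2 GiB for FP16 inference.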

AI Memory Pro Tip 🔧

When working with limited VRAM on budget GPUs, reach for quantization first. Running a model's weights in 8-bit or 4-bit precision instead of the usual 16-bit cuts their memory footprint to roughly a half or a quarter, usually at a small cost in output quality. This lets you run surprisingly capable LLMs locally without running out of VRAM.
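The saving from quantization is simple arithmetic: parameters multiplied by bits per weight. The short sketch below works it out for an 8-billion-parameter model, a size chosen purely for illustration. Real quantized formats also store scaling factors alongside the weights, so actual file sizes tend to be somewhat larger than this estimate.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Memory needed for the weights alone at a given precision."""
    return num_params * bits_per_weight / 8 / 1024 ** 3


if __name__ == "__main__":
    params = 8e9  # illustrative 8-billion-parameter model
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit weights: {weight_memory_gib(params, bits):.1f} GiB")
```

By this estimate the weights shrink from about 14.9 GiB at 16-bit to about 3.7 GiB at 4-bit, which is the difference between not fitting on an 8GB card at all and fitting with room to spare.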

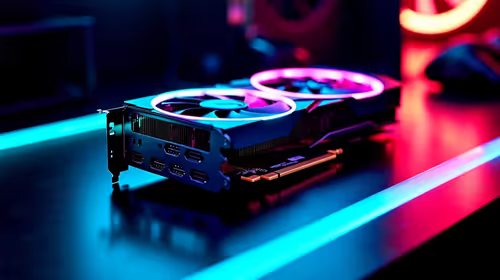

What AI Tasks Can You Actually Perform?

So, what does real-world performance look like? Here is what you can realistically expect from this tier of hardware.

Image Generation and Computer Vision

You can comfortably run Stable Diffusion for AI image generation. It will not be as blisteringly fast as a flagship card... but it will absolutely get the job done. It is a brilliant starting point for students who want to experiment before investing in premium pre-built desktop computers meant for heavy enterprise workloads.

Local LLMs and Data Science

Running local chatbots like Llama 3 is entirely possible if you pick a smaller variant, such as the 8-billion-parameter model, and use quantization. For traditional data science, you can use Nvidia RAPIDS, whose cuDF and cuML libraries provide GPU-accelerated counterparts to Pandas and Scikit-learn. For large datasets, this can make data processing significantly faster than relying purely on your CPU.
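Weights are not the only thing a local chatbot keeps in VRAM: the key/value cache grows with every token of conversation. The sketch below estimates both for Llama 3 8B. It assumes the model shape from its published configuration (32 layers, 8 key/value heads, head dimension 128), an FP16 cache, and a flat 1 GiB allowance for runtime overhead. The overhead figure and the helper names are hypothetical, so treat the result as a rough guide rather than a guarantee.

```python
from dataclasses import dataclass

GIB = 1024 ** 3


@dataclass
class ModelShape:
    params: float   # total parameter count
    layers: int     # transformer layers
    kv_heads: int   # key/value heads (grouped-query attention)
    head_dim: int   # dimension of each attention head


# Llama 3 8B shape, taken from its published config (treated as an assumption here).
LLAMA3_8B = ModelShape(params=8.03e9, layers=32, kv_heads=8, head_dim=128)


def kv_cache_gib(m: ModelShape, context_tokens: int, bytes_per_value: int = 2) -> float:
    """KV cache size: keys and values (factor 2) per layer, per KV head, per token."""
    per_token = 2 * m.layers * m.kv_heads * m.head_dim * bytes_per_value
    return per_token * context_tokens / GIB


def total_gib(m: ModelShape, context_tokens: int,
              bits_per_weight: int = 4, overhead_gib: float = 1.0) -> float:
    """Weights + KV cache + an assumed flat runtime overhead."""
    weights = m.params * bits_per_weight / 8 / GIB
    return weights + kv_cache_gib(m, context_tokens) + overhead_gib


if __name__ == "__main__":
    for bits in (16, 4):
        need = total_gib(LLAMA3_8B, context_tokens=8192, bits_per_weight=bits)
        print(f"{bits}-bit weights, 8192-token context: about {need:.1f} GiB")
```

With these assumptions, the 4-bit model at a full 8192-token context lands under 6 GiB, while the 16-bit version needs far more than an 8GB card can offer.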

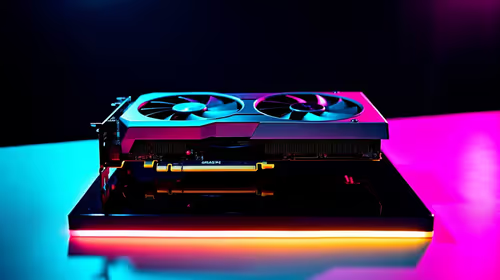

Making the Right Investment 🚀

If your primary goal is professional AI research, you will need far more horsepower and VRAM. You would be much better off exploring high-performance gaming PCs featuring higher-tier graphics cards. They offer the memory capacity and bandwidth that serious machine learning requires.

However, if you want a versatile machine for 1080p gaming that can also dabble in AI coding, the 5050 is a solid entry point. It allows South African creators to learn the ropes of machine learning without spending R30,000 on a GPU alone. Keep an eye on our tech specials to grab the best deals when building your starter AI rig.

Ready to Build Your AI Workstation?

Whether you are training neural networks or just want the best frame rates in your favourite games, you need the right hardware. Explore our massive range of PC components and upgrades to find the perfect match for your budget and workload.