AI is everywhere. From ChatGPT writing your emails to the incredible graphics in the latest games, it’s changing how we work and play. But as a South African, a crucial question arises: should you rely on cloud services, or invest in your own hardware? The debate over cloud vs local AI processing isn't just about tech... it's about speed, privacy, and control, especially with our unique internet challenges. Let's break down what you really need. 🇿🇦

Understanding the AI Processing Showdown

At its core, the choice is simple: does the heavy lifting happen on a massive server farm, often overseas (the cloud), or on the PC sitting on your desk (local)? Both have their place, but the differences are massive.

Cloud AI: The Convenient Option

When you use services like ChatGPT or Midjourney, you're using cloud AI. You send a request, and a powerful remote server processes it and sends back the result.

- Pros: Requires no powerful hardware on your end. You can access it from almost any device with an internet connection.

- Cons: Often involves monthly subscription fees. You're sending your data to a third party, which can be a privacy concern. And the biggest issue for us in SA? It’s completely dependent on a fast, stable internet connection. Lag or an outage means your AI tool is useless.

Local AI: The Power Play

Local AI processing, sometimes called on-device or "edge" AI, means running AI models directly on your own machine. Think of NVIDIA's DLSS technology boosting your frame rates, or Stable Diffusion generating art entirely offline.

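- Pros: There's no network round trip, so responses aren't held up by your connection; how fast they arrive depends on your hardware. Your data stays on your machine, which is a big win for privacy. You own the hardware, so it's largely a once-off cost (electricity aside). Plus, it keeps working when the internet drops, and during loadshedding if you have a UPS or inverter sized for your PC.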

- Cons: It demands powerful hardware, specifically a modern graphics card (GPU).
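To put the subscription-versus-hardware trade-off into numbers, here is a minimal sketch of a break-even calculation. The function name and every rand figure in it are hypothetical, chosen only to illustrate the arithmetic; plug in your own quotes and electricity costs.

```python
import math
from typing import Optional


def break_even_months(hardware_cost: float,
                      monthly_subscription: float,
                      monthly_running_cost: float = 0.0) -> Optional[int]:
    """Months until cloud fees saved cover the once-off hardware cost.

    Returns None when running locally costs as much per month as the
    subscription, because the hardware then never pays for itself.
    """
    monthly_saving = monthly_subscription - monthly_running_cost
    if monthly_saving <= 0:
        return None
    return math.ceil(hardware_cost / monthly_saving)


# Hypothetical figures in rand, for illustration only.
months = break_even_months(hardware_cost=12000,
                           monthly_subscription=400,
                           monthly_running_cost=100)
print(months)  # 40
```

With these made-up numbers the hardware pays for itself after 40 months. The sketch ignores resale value, price changes, and the fact that the same GPU also runs your games, so treat the result as a rough guide.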

When Local AI Processing Makes Sense in South Africa

For many South Africans, the argument for local processing is getting stronger every day. If you're a gamer, a content creator, or simply value your privacy and independence from dodgy internet, investing in the right hardware is the smart move.

Gaming is the most common example. NVIDIA DLSS uses AI models running on your GPU to upscale images, and AMD's FSR 4 takes a similar machine-learning approach (earlier FSR versions relied on hand-tuned algorithms rather than AI). The result is a substantial frame-rate boost. This isn't happening in the cloud; it's your GPU doing the work in real time. For serious content creators running AI-powered filters in video editors or developers training custom models, local processing isn't just nice... it's a necessity. 🚀

Check the VRAM! ⚡

Before diving into local AI image generation with models like Stable Diffusion, check the VRAM (video memory) requirements. Basic models run on GPUs with 6-8GB of VRAM, but for higher resolutions and more complex tasks, you'll want a card with 12GB, 16GB, or even more for the smoothest experience.
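For a rough feel of where VRAM numbers come from, a common rule of thumb is that a model's weights need about (number of parameters) x (bytes per parameter) of memory. The sketch below applies that rule; the function names, the 20% headroom figure, and the example parameter counts are assumptions for illustration, and real usage also depends on resolution, batch size, and the software you run.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weights_gib(num_params: float, precision: str = "fp16") -> float:
    """Approximate memory for the model weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[precision] / 1024**3


def fits_in_vram(num_params: float, precision: str,
                 vram_gib: float, headroom: float = 0.2) -> bool:
    """True if the weights plus an assumed safety margin fit in VRAM.

    The 20% default headroom is a guess standing in for activations
    and other working memory; it is not a measured figure.
    """
    return weights_gib(num_params, precision) * (1 + headroom) <= vram_gib


# Hypothetical 7-billion-parameter model stored in fp16.
print(round(weights_gib(7e9, "fp16"), 2))   # 13.04
print(fits_in_vram(7e9, "fp16", 16))        # True
print(fits_in_vram(7e9, "fp16", 12))        # False
```

Treat the result as a floor rather than a guarantee: if the weights alone barely fit, the card will struggle once the actual work starts.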

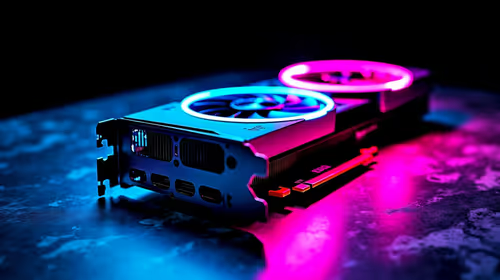

The Right Hardware for Local AI in SA

So, what kind of machine do you need to handle local AI processing? It all starts with the GPU. While a good CPU and at least 16GB of RAM are important, the graphics card does almost all the heavy lifting for AI tasks.

The GPU is King 👑

The parallel processing power that makes GPUs fantastic for gaming also makes them perfect for AI calculations. The undisputed leader in the AI space is NVIDIA, whose CUDA platform is the industry standard for machine learning. This gives their hardware a massive advantage in software support, making a system from our range of NVIDIA GeForce gaming PCs an excellent choice for both gaming and AI work.

However, don't count AMD out. They offer incredible performance-for-your-rand, and their ROCm software platform is rapidly improving. For gamers who want to dabble in AI, an AMD Radeon gaming PC provides a powerful and cost-effective solution.

Beyond the Game: Professional Power

If your AI ambitions go beyond gaming and basic content creation—into fields like data science, 3D architectural rendering, or running large language models—you might need to step up to a dedicated workstation. These machines are built for sustained, heavy workloads, often featuring GPUs with more VRAM, certified drivers for professional applications, and more robust cooling solutions. For the ultimate in local AI power, exploring purpose-built workstation PCs is the way to go.

The choice between cloud vs local AI processing in South Africa often comes down to your needs for speed, privacy, and reliability. While the cloud offers easy access, the power to run AI on your own terms, independent of internet issues, is a true advantage.

Ready to Harness Local AI Power?

Whether you're dominating the latest AAA title or creating with next-gen tools, the right hardware is key. Explore our powerful range of custom-built gaming PCs and find the perfect machine to conquer your world.