Ever asked an AI model like DeepSeek to write code, only to watch the cursor blink... and blink? That frustrating pause happens during 'inference', the stage where the AI 'thinks' its way to an answer. Here in South Africa, as more of us run these powerful tools locally, a critical question emerges: what hardware gives you the best DeepSeek inference speed? The answer lies in a classic tech showdown: the mighty GPU versus the trusty CPU. Let's dive in.

Understanding AI Inference Speed

Before we get to the benchmarks, what exactly is inference? Think of it as the AI's performance phase. After a model like DeepSeek has been trained on massive datasets (the heavy lifting done by developers), inference is the process of it using that knowledge to answer your prompt. Whether you're generating text, code, or images, faster inference means quicker results. ⚡

For local use, the DeepSeek inference speed on your machine directly impacts your workflow. A slow model breaks your creative flow, while a fast one feels like a seamless extension of your own thoughts.

The Hardware Showdown: GPU vs. CPU Performance

When running AI models, not all processors are created equal. Your choice between a Graphics Processing Unit (GPU) and a Central Processing Unit (CPU) is the single biggest factor affecting performance.

The CPU: Your Reliable Starting Point

Your computer's CPU is a master of sequential tasks, handling the core operations of your system with incredible efficiency. For running smaller AI models or for just experimenting, modern CPUs are surprisingly capable. Processors in both the latest Intel Core PCs and powerful AMD Ryzen PCs can certainly run the smaller DeepSeek models. The experience will be slower, with words trickling onto the screen, but it's a perfectly valid and accessible way to start your AI journey.

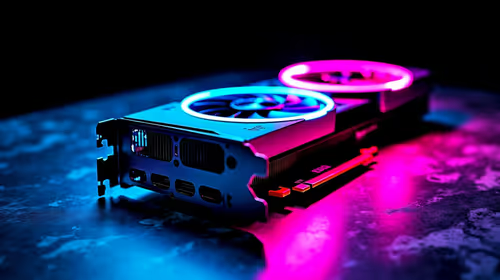

The GPU: The Undisputed Speed King 🚀

This is where things get exciting. GPUs are designed for parallel processing: handling thousands of simple calculations simultaneously. This architecture, originally built for rendering graphics in games, is a perfect fit for the large matrix multiplications at the heart of AI models.
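To see why matrix multiplication suits parallel hardware, here is a minimal sketch in plain Python. The `dot` and `matvec` helpers and the tiny `weights` matrix are made up for illustration; real models use far larger matrices and optimised libraries. The point is that each output value depends on only one row of weights, so no result has to wait for another.

```python
def dot(row, vec):
    # One output value: multiply matching entries and add them up.
    return sum(w * x for w, x in zip(row, vec))


def matvec(weights, vec):
    # Every row's dot product is independent of the others.
    # A CPU works through them a few at a time; a GPU can hand
    # each row to its own group of cores and compute them together.
    return [dot(row, vec) for row in weights]


# Hypothetical 3x2 weight matrix and input vector, for illustration only.
weights = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
vec = [10.0, 1.0]

print(matvec(weights, vec))  # [12.0, 34.0, 56.0]
```

A language model repeats this kind of operation across matrices with millions of entries for every token it generates, which is where the extra cores pay off.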

When you offload the task to a graphics card, the GPU vs CPU performance benchmarks show a massive difference. Provided the model fits in the card's memory, a decent GPU can increase DeepSeek inference speed by 10x, 20x, or even more. Instead of waiting for a sentence to form, you'll see entire paragraphs appear in seconds. High-performance NVIDIA GeForce gaming PCs are the most widely supported choice for this, but both AMD Radeon gaming PCs and even newcomers like Intel's Arc series GPUs offer significant acceleration.

Check Your VRAM! 🧠

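When choosing a GPU for AI, Video RAM (VRAM) is just as important as raw speed. Large language models need to be loaded into the GPU's memory to run at full speed, and how much they need depends on the precision of the weights. A 7B-parameter DeepSeek model takes roughly 14GB at 16-bit precision, while a 4-bit quantised version comes in at around 4GB to 5GB and fits on an 8GB card with room left for your conversation. Bigger models need more. Always check the VRAM before you buy!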
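As a rough guide, you can estimate the memory a model's weights need from its parameter count and the number of bits stored per weight. The sketch below uses a hypothetical helper, `estimate_weight_gb`, and counts the weights only. Real quantised files run somewhat larger, and the context cache and runtime overhead add more on top, so treat the result as a lower bound.

```python
def estimate_weight_gb(params_billions, bits_per_weight):
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes).

    Counts weights only; context cache and runtime overhead are extra.
    """
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# A 7B-parameter model at common precisions.
for bits in (16, 8, 4):
    size = estimate_weight_gb(7, bits)
    print(f"7B model at {bits}-bit: about {size:.1f} GB for the weights")
```

If the estimate is already close to your card's VRAM, expect the model to spill into system RAM and slow down.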

What Do the Performance Benchmarks Tell Us?

While exact numbers vary based on the specific model, drivers, and system configuration, the trend is crystal clear. Performance is often measured in 'tokens per second' (a token is roughly a word or part of a word).

- CPU Performance: You might see speeds of 2-10 tokens/second. Usable, but you'll be waiting.

- GPU Performance: A mid-range gaming GPU can easily hit 30-80 tokens/second. High-end cards and specialised workstation PCs can push well over 100 tokens/second.
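To put those figures in everyday terms, this small sketch converts tokens per second into waiting time. The 500-token response length is an assumption chosen for illustration (roughly a few paragraphs), and `seconds_to_generate` is a hypothetical helper; the speeds are the ranges quoted above.

```python
def seconds_to_generate(num_tokens, tokens_per_second):
    # Time to produce a response, ignoring prompt processing time.
    return num_tokens / tokens_per_second


response_tokens = 500  # assumed response length, for illustration

# Speeds taken from the ranges quoted in this article.
scenarios = [
    ("CPU, slower end", 2),
    ("CPU, faster end", 10),
    ("Mid-range GPU, slower end", 30),
    ("Mid-range GPU, faster end", 80),
]

for label, tps in scenarios:
    wait = seconds_to_generate(response_tokens, tps)
    print(f"{label}: {tps} tokens/s -> about {wait:.0f} s")
```

The same answer that takes minutes on a slower CPU arrives in seconds on a mid-range GPU.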

The conclusion is simple: for the best DeepSeek inference speed, a dedicated GPU isn't just a nice-to-have; it's essential for any serious work.

So, What PC Is Right for You?

Choosing the right hardware comes down to your needs and budget.

- Just Curious? If you're only dabbling, your existing modern PC might be enough to get a taste.

- Budget-Conscious Hobbyist? Look towards budget-friendly gaming PCs. Even an entry-level dedicated GPU will provide a monumental speed boost over CPU-only inference.

- Serious Developer or Creator? Don't compromise. Investing in one of the best gaming PC deals with a high-VRAM NVIDIA or AMD card will pay for itself in saved time and reduced frustration. For ultimate convenience, exploring powerful pre-built PC deals can get you up and running in no time.

Ultimately, harnessing the power of local AI in South Africa is more accessible than ever. With the right machine, you can turn that blinking cursor into a torrent of creativity. ✨

Ready to Unleash AI Speed?

Whether you're starting out or building a professional AI powerhouse, the right hardware is key. A powerful GPU will transform your DeepSeek experience from a crawl to a sprint. Explore our massive range of gaming and workstation PCs and find the perfect machine to accelerate your ideas.