Cloud AI compute is billed in dollars, so the costs climb quickly once you convert them to ZAR. If you want to train or fine-tune large language models in South Africa, building a local rig is often the smarter move for sustained workloads. Wondering how to set up multiple GPUs for AI training at home? It is easier than you think... provided you get the hardware balance right. Let us dive into the essentials.

Choosing the Right Hardware for Multi-GPU AI 🚀

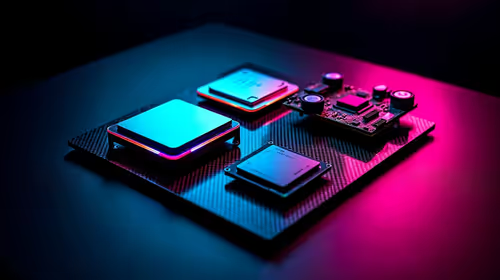

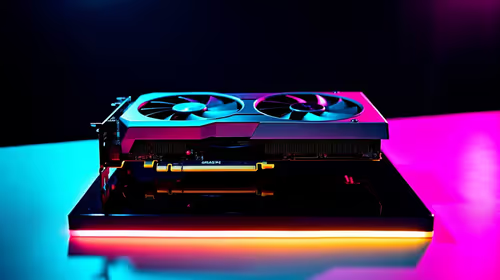

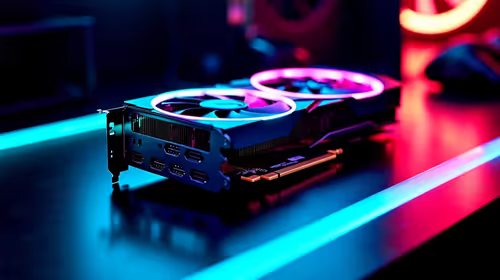

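AI training thrives on VRAM and CUDA cores. When you set up multiple GPUs for AI training at home, your first priority is the graphics cards themselves. NVIDIA RTX 4090s are popular for deep learning because each card offers 24GB of VRAM. That memory has to hold the model's weights, gradients, optimiser state and the activations for every batch; run out and training stops with an out-of-memory error. Keep in mind that with standard data-parallel training each card holds its own full copy of the model, so a second card speeds up training rather than doubling the model size you can fit.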
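To get a feel for how far 24GB goes, here is a rough back-of-the-envelope sketch. It assumes mixed-precision training with the Adam optimiser, where a common rule of thumb is about 16 bytes per parameter (fp16 weights and gradients, plus fp32 master weights and two fp32 Adam moments). Activations are excluded, so real usage will be higher; the function names are purely illustrative.

```python
# Rough VRAM estimate for mixed-precision training with Adam.
# Assumption (rule of thumb, not a measurement):
#   2 bytes fp16 weights + 2 bytes fp16 gradients
#   + 4 bytes fp32 master weights + 4 + 4 bytes for Adam's two moments
BYTES_PER_PARAM = 2 + 2 + 4 + 4 + 4  # 16 bytes per parameter


def training_state_gb(num_params: int) -> float:
    """Approximate GB for weights, gradients and optimiser state (no activations)."""
    return num_params * BYTES_PER_PARAM / 1e9


def max_params_for_vram(vram_gb: float) -> int:
    """Largest parameter count whose training state fits in vram_gb (no activations)."""
    return int(vram_gb * 1e9 / BYTES_PER_PARAM)


if __name__ == "__main__":
    for label, params in [("1B parameters", 1_000_000_000),
                          ("7B parameters", 7_000_000_000)]:
        print(f"{label}: ~{training_state_gb(params):.0f} GB before activations")
    print(f"Upper bound for a 24GB card: ~{max_params_for_vram(24) / 1e9:.1f}B parameters")
```

Under these assumptions, full training tops out at roughly 1.5 billion parameters per 24GB card before activations are counted, which is why larger models are usually fine-tuned at home with memory-saving techniques rather than trained from scratch.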

Motherboard and PCIe Lane Considerations

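You cannot simply slot two large cards into any random board. Your motherboard needs enough physical spacing between slots (an RTX 4090 cooler typically takes up three or more) and enough PCIe lanes to avoid bottlenecking data transfer between the CPU and the GPUs. While many enthusiasts build from scratch, starting with one of our high-end custom gaming PCs can give you a solid foundation. Look for an X670E or Z790 motherboard that can run its two main slots at x8/x8. On these consumer platforms the CPU's 16 graphics lanes are shared between the cards, and some boards wire the second slot to the chipset at a lower speed, so check the manual before you buy.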

Alternatively, if you want a workstation ready to go out of the box, exploring pre-built PC deals can save you the headache of cable management and hardware compatibility testing.
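To put numbers to the PCIe lane point above, the short sketch below works out the theoretical one-direction bandwidth of a link from its generation and lane count. The per-lane transfer rates and 128b/130b encoding come from the PCIe 3.0, 4.0 and 5.0 specifications; real-world throughput is lower, and the helper name is just for illustration.

```python
# Theoretical one-direction PCIe bandwidth for a given generation and width.
# Per-lane transfer rates in GT/s; PCIe 3.0, 4.0 and 5.0 all use 128b/130b encoding.
GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING_EFFICIENCY = 128 / 130


def pcie_gb_per_s(gen: int, lanes: int) -> float:
    """Return theoretical GB/s in one direction (before protocol overhead)."""
    bits_per_s_per_lane = GT_PER_S[gen] * ENCODING_EFFICIENCY  # usable Gbit/s per lane
    return bits_per_s_per_lane / 8 * lanes                     # bits -> bytes, times lanes


if __name__ == "__main__":
    # The RTX 4090 is a PCIe 4.0 card, so compare a full x16 link with x8 and x4.
    for lanes in (16, 8, 4):
        print(f"PCIe 4.0 x{lanes}: ~{pcie_gb_per_s(4, lanes):.1f} GB/s")
```

Halving the lane count halves the ceiling, which matters most when the GPUs exchange gradients with each other or pull large batches from system memory.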

Power Supply Warning ⚡

Running dual GPUs draws serious power: a single RTX 4090 is rated at 450W on its own. Choose a quality 80+ Platinum power supply in the 1200W to 1600W range. A sudden power spike during a heavy training epoch can trip the over-current protection on a weaker PSU and shut the system down mid-run.
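If you want to sanity-check a PSU size for your own parts list, a simple budget like the one below is a reasonable starting point. The 450W figure is NVIDIA's rated board power for the RTX 4090; the CPU draw, the allowance for the rest of the system and the 25% headroom are assumptions you should replace with your own numbers.

```python
# Simple PSU sizing sketch for a multi-GPU build.
GPU_BOARD_POWER_W = 450   # NVIDIA's rated board power for an RTX 4090
CPU_POWER_W = 250         # assumption: high-end desktop CPU under full load
REST_OF_SYSTEM_W = 100    # assumption: motherboard, RAM, SSDs, fans, pump
HEADROOM = 1.25           # assumption: 25% margin for transient spikes


def recommended_psu_watts(num_gpus: int) -> float:
    """Sustained system draw plus headroom, in watts."""
    sustained = num_gpus * GPU_BOARD_POWER_W + CPU_POWER_W + REST_OF_SYSTEM_W
    return sustained * HEADROOM


if __name__ == "__main__":
    for gpus in (1, 2):
        print(f"{gpus} GPU(s): aim for roughly {recommended_psu_watts(gpus):.0f}W")
```

With these assumptions a dual-card build lands a little above 1500W, at the upper end of the 1200W to 1600W range.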

Cooling Your Home AI Server 🔧

Heat is the enemy of sustained AI workloads. Two graphics cards stacked closely together will thermal throttle if airflow is poor, which slows every training run. Opt for a full-tower chassis with high static pressure fans to force cool air between the components.

Once your rig is built, you do not need to sit next to it. Many developers use high-performance laptops to SSH into their home server from the couch... keeping the noisy, heat-generating rig safely tucked away in a well-ventilated room.
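Since the rig is out of sight, it helps to check temperatures over that SSH session. Below is a minimal sketch that calls `nvidia-smi` (installed with the NVIDIA driver) using its CSV query mode and parses the result. The 80°C alert threshold and the function names are assumptions for illustration, not NVIDIA recommendations.

```python
# Minimal GPU temperature/power check built on nvidia-smi's CSV query mode.
import subprocess

QUERY_FIELDS = "index,name,temperature.gpu,power.draw"
ALERT_TEMP_C = 80  # assumption: pick a threshold that suits your cards and case


def parse_gpu_csv(text: str) -> list[dict]:
    """Parse 'index, name, temperature, power' lines into dictionaries."""
    gpus = []
    for line in text.strip().splitlines():
        index, name, temp, power = [field.strip() for field in line.split(",")]
        gpus.append({
            "index": int(index),
            "name": name,
            "temp_c": int(temp),
            "power_w": float(power),
            "hot": int(temp) >= ALERT_TEMP_C,
        })
    return gpus


def read_gpus() -> list[dict]:
    """Run nvidia-smi and return one dictionary per GPU."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_csv(result.stdout)
```

Calling `read_gpus()` on the server during a training run returns one entry per card, so you can spot if the upper GPU is running noticeably hotter than the lower one.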

Software Optimisation for Deep Learning

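Once the hardware is assembled, install the latest NVIDIA driver and the CUDA toolkit. Ubuntu is generally the preferred operating system for deep learning frameworks like PyTorch and TensorFlow. On Ubuntu that means NVIDIA's proprietary Linux driver; the Studio drivers are the equivalent choice only if you stay on Windows.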
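After the driver and framework are installed, a quick check confirms that every card is actually visible. The sketch below assumes PyTorch with CUDA support is installed; it returns an empty list instead of failing if PyTorch or a usable GPU is missing. The function name is illustrative.

```python
# Quick check that PyTorch can see every GPU in the system.
def describe_gpus() -> list[str]:
    """Return one description per CUDA device, or an empty list if none are usable."""
    try:
        import torch  # assumption: PyTorch built with CUDA support
    except ImportError:
        return []
    if not torch.cuda.is_available():
        return []
    descriptions = []
    for i in range(torch.cuda.device_count()):
        props = torch.cuda.get_device_properties(i)
        vram_gib = props.total_memory / 1024**3
        descriptions.append(f"cuda:{i}  {props.name}  {vram_gib:.1f} GiB")
    return descriptions


if __name__ == "__main__":
    gpus = describe_gpus()
    print("\n".join(gpus) if gpus else "No CUDA GPUs visible to PyTorch")
```

If a dual-card build only lists one device, reseat the second card and check its power cables and the slot configuration in the BIOS before blaming the software.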

Remember that fast storage is crucial to feed massive datasets to your system without delay. Keep an eye on our latest tech specials to grab high-speed NVMe Gen4 SSDs at a great ZAR price point.

Ready to Build Your AI Workstation?

Training AI models locally saves you thousands in cloud fees over time. For maximum power, choice, and value in South Africa, Evetech is your ultimate hardware partner. Explore our massive range of graphics cards and find the perfect hardware to conquer your datasets.