So, you’ve seen the wild AI art, heard about ChatGPT writing essays, and maybe even tinkered with a local AI model yourself. Artificial intelligence isn't just for massive data centres anymore; it's right here on our desktops. But running these powerful tools requires serious muscle. If your PC starts to sweat just thinking about it, it’s time to consider a PC upgrade for AI. Let's break down the essential components you need for peak performance. 🧠

The GPU: Your AI Workhorse

When it comes to AI, the Graphics Processing Unit (GPU) does most of the work. Neural networks spend most of their time on large batches of matrix maths, and the parallel processing power that makes your games look incredible is exactly what crunches through those calculations quickly.

NVIDIA vs. AMD for AI

For years, NVIDIA has been the go-to for AI development, thanks to its CUDA (Compute Unified Device Architecture) platform and dedicated Tensor Cores. Most AI software and machine learning libraries are optimised for CUDA, giving NVIDIA a significant head start. If you're serious about AI, exploring one of our powerful NVIDIA GeForce gaming PCs is an excellent first step.

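However, don't count AMD out. Their recent GPUs are incredibly powerful, and their ROCm software platform is gaining ground. Popular frameworks such as PyTorch offer ROCm builds, but support varies by GPU model and operating system, so check that your card is on the supported list first. For tasks that aren't strictly tied to CUDA, you can get strong performance and value from Team Red. Many of our capable AMD Radeon gaming PCs are more than ready for AI workloads.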

VRAM: The More, The Better

Video RAM (VRAM) is critical. It's the memory on your graphics card where AI models and the data they're working on are loaded. If a model doesn't fit, it either fails with an out-of-memory error or spills over into much slower system RAM, and performance drops off a cliff. For generating high-resolution images or working with large language models, 12GB of VRAM is a good starting point, but 16GB or even 24GB is ideal for more demanding tasks.
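If you already have an NVIDIA card, it's worth checking how much VRAM you're working with before planning an upgrade. The snippet below is a minimal sketch that asks the `nvidia-smi` tool (installed with NVIDIA's drivers) for each GPU's name and total memory. The helper names `parse_gpu_line` and `query_gpus` are our own for illustration, and the script simply reports nothing on machines without an NVIDIA driver.

```python
import shutil
import subprocess


def parse_gpu_line(line: str) -> tuple[str, int]:
    """Parse one 'name, memory_in_MiB' CSV line into (name, MiB)."""
    # Split on the last comma so a comma inside the GPU name cannot break parsing.
    name, mem = line.rsplit(",", 1)
    return name.strip(), int(mem.strip())


def query_gpus() -> list[tuple[str, int]]:
    """Return (name, total VRAM in MiB) per NVIDIA GPU, or [] if unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []  # no NVIDIA driver tools on this machine
    try:
        out = subprocess.run(
            [
                "nvidia-smi",
                "--query-gpu=name,memory.total",
                "--format=csv,noheader,nounits",
            ],
            capture_output=True,
            text=True,
            check=True,
        ).stdout
        return [parse_gpu_line(l) for l in out.splitlines() if l.strip()]
    except (subprocess.CalledProcessError, OSError, ValueError):
        return []  # tool failed or returned something unexpected


if __name__ == "__main__":
    for name, mib in query_gpus():
        print(f"{name}: {mib / 1024:.1f} GiB VRAM")
```

AMD users would need a different tool; this sketch only covers the NVIDIA case.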

Check Before You Buy 🔧

Before choosing a GPU, look up the VRAM requirements for the specific AI models you want to run (e.g., Stable Diffusion XL, Llama 3). A quick search for "Stable Diffusion vram requirements" will give you a realistic target and help you avoid buying a card that can't handle your dream projects.
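As a rough cross-check on the numbers you find, you can estimate how much VRAM a model's weights need from its parameter count and numeric precision. The sketch below is back-of-the-envelope arithmetic, not a guarantee: the function names are our own, and the 20% overhead allowance for activations and other runtime buffers is an assumption that varies a lot between tools and settings.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def estimate_weight_vram_gib(num_params: float, precision: str = "fp16") -> float:
    """Weights-only memory in GiB (ignores activations, caches, and buffers)."""
    return num_params * BYTES_PER_PARAM[precision] / 1024**3


def fits(num_params: float, precision: str, vram_gib: float,
         overhead_fraction: float = 0.2) -> bool:
    """True if the weights plus an ASSUMED overhead allowance fit in vram_gib."""
    needed = estimate_weight_vram_gib(num_params, precision) * (1 + overhead_fraction)
    return needed <= vram_gib


if __name__ == "__main__":
    params = 8e9  # an 8-billion-parameter model, for illustration
    for precision in ("fp16", "int8", "int4"):
        gib = estimate_weight_vram_gib(params, precision)
        print(f"{precision}: ~{gib:.1f} GiB for weights alone")
    for card in (12, 16, 24):
        print(f"{card} GB card, fp16: {'fits' if fits(params, 'fp16', card) else 'too small'}")
```

Under these assumptions, an 8-billion-parameter model at 16-bit precision needs roughly 15 GiB for weights alone, which is why quantised (8-bit or 4-bit) versions are popular on 12GB cards.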

CPU and RAM: The Supporting Cast

While the GPU handles the heavy lifting, a sluggish CPU or insufficient system RAM will create a bottleneck, slowing everything down. A proper PC upgrade for AI requires a balanced system.

- CPU (Central Processing Unit): You don't need the absolute best CPU on the market, but a modern processor with 6 to 8 cores or more is recommended. The CPU manages data preparation, loads files, and keeps the whole system running smoothly while the GPU is maxed out.

- RAM (Random Access Memory): 32GB of fast DDR4 or DDR5 RAM should be your baseline. AI tasks can be memory-intensive, especially when you're multitasking, for instance training a model while browsing for tutorials.

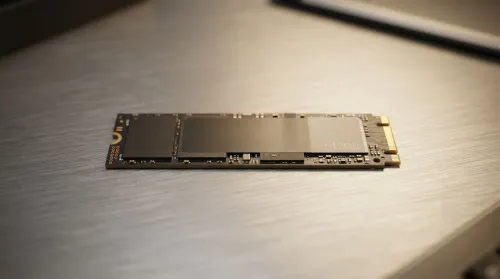

Storage and Power: The Foundation

Don't let your high-end components be held back by a slow hard drive or a weak power supply.

- Storage: A fast NVMe SSD is non-negotiable. Loading large datasets and AI models from a traditional hard drive is painfully slow. An NVMe drive will slash your loading times and make the entire system feel more responsive. A 1TB drive is a great start.

- PSU (Power Supply Unit): High-end GPUs are thirsty for power. Ensure you have a reliable, high-quality PSU from a reputable brand with enough wattage to comfortably power your entire system under load. Skimping here risks instability and can even damage your components. For guaranteed stability, our pre-configured professional workstation PCs are built with perfectly matched, high-quality components.
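The advice about leaving comfortable headroom on the PSU can be turned into quick arithmetic. Here is a minimal sketch, assuming you look up each component's peak power draw on its spec sheet. The wattages in the example, the 30% headroom, and rounding up to the next 50 W step are illustrative assumptions rather than a sizing standard, and `recommended_psu_watts` is a hypothetical helper name.

```python
import math


def recommended_psu_watts(component_watts: dict[str, float],
                          headroom: float = 0.3,
                          step: int = 50) -> int:
    """Sum peak component draw, add headroom, round up to the next PSU size step."""
    total = sum(component_watts.values())
    target = total * (1 + headroom)
    return int(math.ceil(target / step) * step)


if __name__ == "__main__":
    # Example figures only; replace with the peak draw of your own parts.
    build = {"gpu": 320, "cpu": 125, "rest_of_system": 75}
    print(f"Peak draw: {sum(build.values())} W")
    print(f"Suggested PSU: {recommended_psu_watts(build)} W")
```

Treat the result as a floor and check it against the GPU manufacturer's own recommended PSU wattage, which takes short power spikes into account.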

Building an AI-ready PC is about creating a balanced machine where no single part holds the others back. By focusing on a powerful GPU with plenty of VRAM and backing it up with a solid CPU, sufficient RAM, and fast storage, you'll be ready to explore the incredible world of artificial intelligence. 🚀

Ready to Build Your AI Powerhouse?

A PC upgrade for AI can feel complex, but the performance gains are massive. Whether you're a creator, a developer, or just curious, having the right hardware is key. Use our advanced Custom PC Builder to configure the perfect AI machine for your needs and budget.