Tired of waiting for ChatGPT or hitting API limits? The real power of AI is running it yourself, right on your own machine. Building a dedicated PC for LLM (Large Language Model) tasks isn't just for data scientists anymore. It’s for creators, developers, and anyone in South Africa wanting total control and privacy. This guide will walk you through creating the ultimate local AI powerhouse, step-by-step. Let's get building! 🔧

Why a Custom PC Build for LLMs is a Smart Move

Running large language models in the cloud is convenient, but it comes with strings attached… like monthly fees and privacy concerns. A dedicated PC build for LLM work on your desk flips the script.

You get:

- Total Privacy: Your data and prompts never leave your hardware.

- No Costs Per Query: Once the PC is built, you can experiment endlessly without worrying about a rising bill.

- Low Latency: Local processing removes the internet round trip, so responses start as soon as your hardware can generate them. How fast they stream after that depends on your GPU.

- Full Customisation: Fine-tune models on your own datasets for specialised tasks.

The Key Components for Your LLM PC Build 🧠

Building a computer for large language models is different from a standard gaming rig. While there's overlap, the priority shifts from frame rates to raw parallel processing power and memory bandwidth.

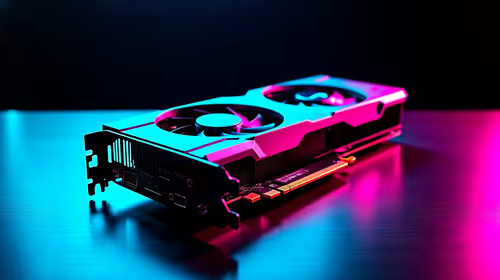

The GPU: VRAM is Your New Best Friend

The Graphics Processing Unit (GPU) does almost all the heavy lifting. For LLMs, one metric rules them all: Video RAM (VRAM). The more VRAM you have, the larger and more complex the models you can run.

NVIDIA is the undisputed champion here due to its CUDA software platform, which is the industry standard for AI. An RTX 4080 with 16GB or an RTX 4090 with 24GB of VRAM is an excellent starting point. A powerful GPU is the heart of any serious LLM PC build. For top-tier performance, exploring a pre-configured NVIDIA GeForce gaming PC can give you a perfectly balanced system right out of the box.

CPU, RAM, and Storage: The Supporting Cast

While the GPU is the star, the other components are crucial for a smooth-running system.

- CPU (Processor): You don't need the absolute best, but a modern processor with multiple cores (like an Intel Core i7 or AMD Ryzen 7) is vital for preparing data and keeping the whole system responsive.

- System RAM: Aim for at least 32GB of fast DDR5 RAM. If you plan on training models or handling massive datasets, 64GB or even 128GB is a worthwhile investment.

- Storage: A fast NVMe SSD is non-negotiable. Models can be huge (tens of gigabytes), and loading them from a slow drive will be a major bottleneck. A 2TB NVMe drive is a great starting point.
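To put the storage point in numbers, here is a rough back-of-the-envelope sketch in Python. The drive speeds are illustrative ballpark figures for sequential reads, not measurements, and the 40GB model size is a hypothetical example. Real load times also depend on the file format, the CPU, and the copy into VRAM, so treat the output as a lower bound.

```python
# Rough estimate of how long it takes just to read a model file from disk.
# All speeds below are assumed ballpark sequential-read figures, not benchmarks.

ASSUMED_READ_SPEEDS_MB_S = {
    "Hard drive": 150,
    "SATA SSD": 550,
    "NVMe SSD (PCIe 4.0)": 7000,
}


def load_time_seconds(model_size_gb: float, read_speed_mb_s: float) -> float:
    """Time to read model_size_gb from a drive at read_speed_mb_s (1 GB = 1000 MB)."""
    return model_size_gb * 1000 / read_speed_mb_s


MODEL_SIZE_GB = 40  # hypothetical model file size

for drive, speed in ASSUMED_READ_SPEEDS_MB_S.items():
    seconds = load_time_seconds(MODEL_SIZE_GB, speed)
    print(f"{drive}: about {seconds:.0f} s to read a {MODEL_SIZE_GB} GB model")
```

Swap in the rated read speed of the drive you are considering to see how much waiting it adds every time you switch models.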

While NVIDIA dominates the AI space, AMD's hardware is becoming increasingly capable. For those looking at a more budget-conscious build or who are invested in the AMD ecosystem, certain high-end AMD Radeon gaming PCs offer impressive general performance that supports a wide range of tasks.

VRAM & Model Size ⚡

A model's size is measured in 'parameters'. An 8-billion (8B) parameter model like Llama 3 8B Instruct might need around 8GB of VRAM to run comfortably when quantised to 8-bit precision or lower; at full 16-bit precision, the weights alone take roughly 16GB. A 70B model, however, will require much more… often needing 48GB+ of VRAM even when quantised, which usually means using multiple GPUs or a professional-grade card. Always check the model's requirements before downloading!
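If you want a quick sanity check before downloading, the arithmetic can be sketched in a few lines of Python. This is a rough rule of thumb, not an official formula: it multiplies the parameter count by the bytes per weight, then adds a flat 20% allowance for context and runtime overhead. That 20% figure is an assumption for illustration; actual usage varies with context length and the software you run.

```python
# Rule-of-thumb VRAM estimate: weight storage plus a flat overhead allowance.
# The 20% overhead default is an illustrative assumption, not a measured value.

def estimate_vram_gb(params_billion: float, bits_per_weight: int,
                     overhead_fraction: float = 0.2) -> float:
    """Approximate VRAM in GB needed to run a model at a given precision."""
    weights_gb = params_billion * bits_per_weight / 8  # billions of params x bytes each
    return weights_gb * (1 + overhead_fraction)


def fits_in_vram(vram_gb: float, params_billion: float, bits_per_weight: int) -> bool:
    """True if the estimate fits within the card's VRAM."""
    return estimate_vram_gb(params_billion, bits_per_weight) <= vram_gb


for params in (8, 70):
    for bits in (16, 8, 4):
        needed = estimate_vram_gb(params, bits)
        print(f"{params}B model at {bits}-bit: roughly {needed:.1f} GB")
```

By this estimate, an 8B model at 4-bit fits easily on a 16GB card, while a 70B model at 4-bit lands above what a single 24GB card can hold.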

Is a DIY Build the Right Path for You?

Assembling your own PC build for LLM tasks is incredibly rewarding. You get to hand-pick every component and understand your machine inside and out. However, it requires research, patience, and careful assembly to ensure all parts are compatible and performing optimally.

For many professionals and serious hobbyists in South Africa, the time and potential for error can be a significant drawback. If your goal is to get to work immediately with a system guaranteed to perform under heavy AI workloads, a pre-built machine is often the smarter choice. These systems are designed and tested for stability and performance, saving you the headache. For the most demanding AI and data science tasks, purpose-built Workstation PCs offer certified components and enterprise-grade reliability.

Ready to Power Your AI Dreams? 🚀

Building a specialised PC for LLMs is a serious undertaking. If you'd rather skip the assembly and get straight to innovating, Evetech has you covered. Explore our range of high-performance Workstation PCs, expertly configured and tested to handle the most demanding AI workloads right out of the box.