
128GB unified RAM can run large language models locally for many use cases, but model size, quantization, and context length decide how far it goes 🚀🤖 Learn what fits and what to upgrade.

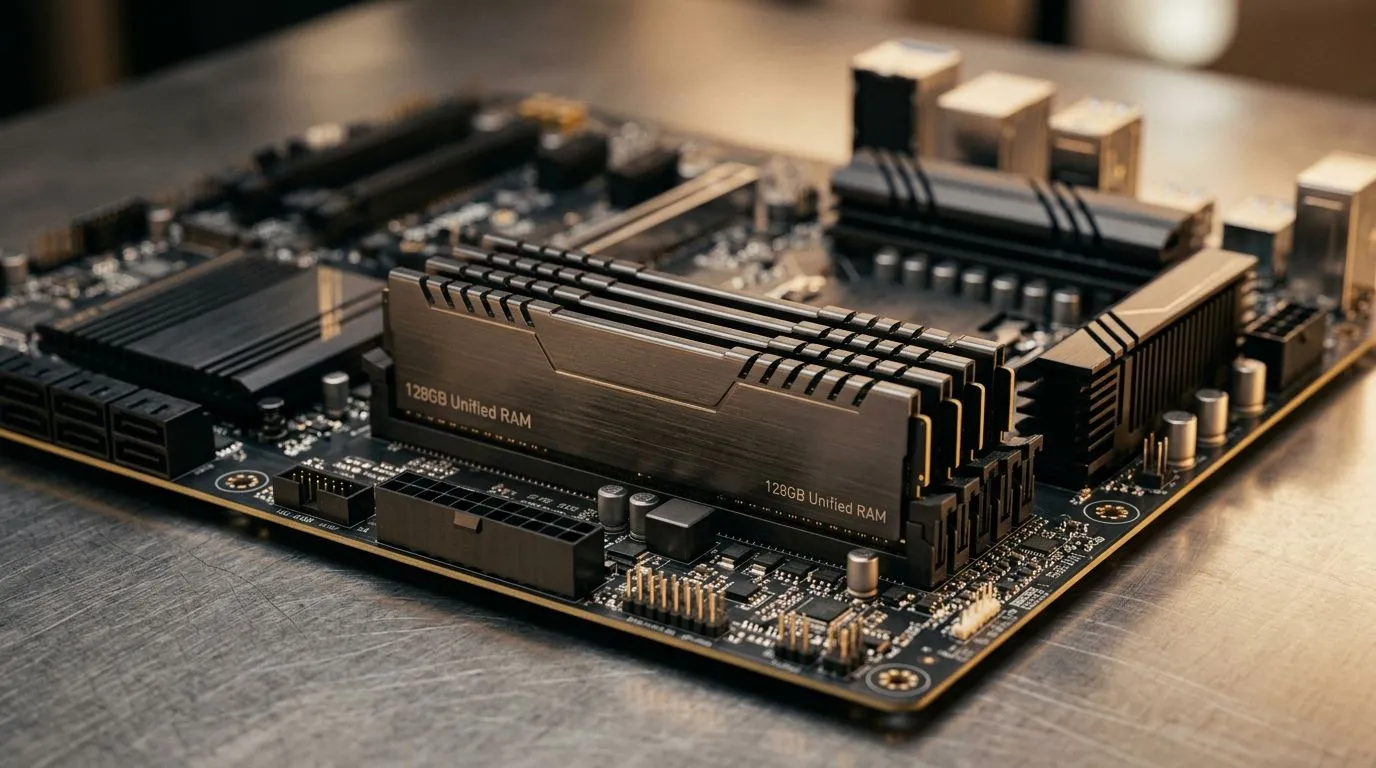

South African tech enthusiasts and gamers are buzzing over 128GB unified RAM—particularly when running large language models (LLMs). Is this amount truly enough to handle advanced AI workloads or demanding multitasking? From gaming to development, practical RAM capacity impacts speed and efficiency. Today, we’ll explore whether 128GB unified memory meets the needs of complex LLMs and where it fits in the SA tech landscape.

Unified RAM combines system and graphics memory into one shared pool, so the CPU and GPU work from the same data instead of copying it between separate memory banks. That suits LLMs, which need fast access to a large amount of memory to train models or perform inference. With 128GB of unified RAM, more of the model parameters and working data stay in memory, which avoids frequent swaps to slower drives.

For South African users eager to upgrade, mini PCs are a compact option with surprisingly powerful specs. Check out this selection of mini PCs at Evetech that offer configurations with high unified RAM suitability.

In real-world LLM applications, 128GB unified RAM covers most mid-sized to large models comfortably. However, the largest models, from around 70 billion parameters upward, may push beyond this unless they are heavily quantized, and may require more RAM or a distributed computing setup. For developers and AI hobbyists, the practical question is how much model size and context length your budget needs to cover.
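As a rough way to check what fits, the weights of a model take about (parameter count × bits per weight ÷ 8) bytes. The sketch below applies that arithmetic to a 128GB budget. The function names and the 16GB headroom figure are assumptions made for illustration, not measurements, and real model files add some overhead on top of this estimate.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in decimal gigabytes."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_budget(params_billions: float, bits_per_weight: float,
                   budget_gb: float = 128.0, headroom_gb: float = 16.0) -> bool:
    """True if the weights fit after reserving headroom_gb for everything else.

    headroom_gb is a hypothetical allowance for the OS, other apps, and the
    context cache; it is an assumption, not a measured value.
    """
    usable_gb = budget_gb - headroom_gb
    return weight_memory_gb(params_billions, bits_per_weight) <= usable_gb


if __name__ == "__main__":
    # Model sizes mentioned in this article, at three common precisions.
    for params in (7, 13, 34, 70):
        for bits in (16, 8, 4):
            size = weight_memory_gb(params, bits)
            verdict = "fits" if fits_in_budget(params, bits) else "too large"
            print(f"{params}B at {bits}-bit: about {size:.1f} GB ({verdict})")
```

Under these assumptions a 70B model at 16-bit needs about 140 GB for weights alone, which exceeds the pool, while the same model at 4-bit needs about 35 GB.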

South African creatives and coders benefit from compact gear like the Minisforum Mini PCs, which strike a great balance between power, size, and memory capacity.

Because unified RAM is shared between the system and the GPU, every open app takes memory away from the model, so close unnecessary apps to prioritise LLM processing. Also, consider pairing your unified RAM with a fast NVMe SSD so models load and stream from disk more smoothly.

Memory is only part of the picture. When leaning on unified RAM, make sure the CPU and GPU are strong enough to make use of it. MSI and Ninkear have solid mini PC offerings with integrated support for high-capacity unified RAM setups. Have a look at MSI’s mini PCs here or explore Ninkear’s range for a setup that matches your LLM ambitions.

Mini PCs simplify high-RAM usage in compact spaces. They’re ideal for South African content creators and tech buyers looking to experiment or develop AI with minimal desk clutter.

South Africa’s tech community thrives on balancing cost and capability. While 128GB unified RAM handles today’s large language models well, AI’s rapid evolution means demands will rise. Modular systems like these powerful mini PCs offer upgrade-friendly frameworks. This way, you stay ready to expand as your AI projects grow.

Ultimately, the sweet spot between sufficient RAM and overall system capability defines smooth LLM performance.

Elevate Your AI Setup Today

Ready to power your AI and gaming experience? Shop now at Evetech for mini PCs configured with robust unified RAM and performance that leaves lag in the dust.

128GB unified RAM is enough for many 7B to 34B models, especially with quantization. Very large models may still need more memory or lower settings.

A system with 128GB unified RAM can often run 7B, 13B, and many 34B models locally. Some larger models work only with heavy quantization and shorter context.

Quantization lowers memory use, so 128GB unified RAM can hold larger LLMs and often run local inference faster.

Whether a 70B model runs on 128GB unified RAM depends on model format, quantization, and context length. 70B models may fit only in optimized setups.

Longer context uses more memory. Even with 128GB unified RAM, large context windows can push an LLM beyond comfortable limits.
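To see why context length matters, a transformer keeps a key and a value vector for every token in the context, in every layer. A minimal sketch of that estimate follows. The layer count, head count, and head size in the example are hypothetical values chosen for illustration and do not describe any specific model named in this article.

```python
def kv_cache_gb(n_layers: int, n_kv_heads: int, head_dim: int,
                context_tokens: int, bytes_per_value: int = 2,
                batch_size: int = 1) -> float:
    """Approximate key/value cache size in decimal gigabytes.

    The leading factor of 2 covers one key and one value per token, per layer.
    bytes_per_value=2 assumes the cache is stored at 16-bit precision.
    """
    bytes_per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return bytes_per_token * context_tokens * batch_size / 1e9


if __name__ == "__main__":
    # Hypothetical large-model shape: 80 layers, 8 KV heads, head size 128.
    for context in (8_192, 32_768, 131_072):
        size = kv_cache_gb(80, 8, 128, context)
        print(f"{context} tokens of context: about {size:.1f} GB of cache")
```

The cache grows linearly with context length, so a window 16 times longer needs 16 times the cache memory on top of the model weights.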

Unified RAM offers efficient CPU and GPU sharing on supported systems, which can help local AI workloads, but capacity still matters most.

Choose a system with more memory, faster storage, and a stronger GPU or better accelerator if you want to run bigger LLMs locally.